New scrape process

February 22, 2020

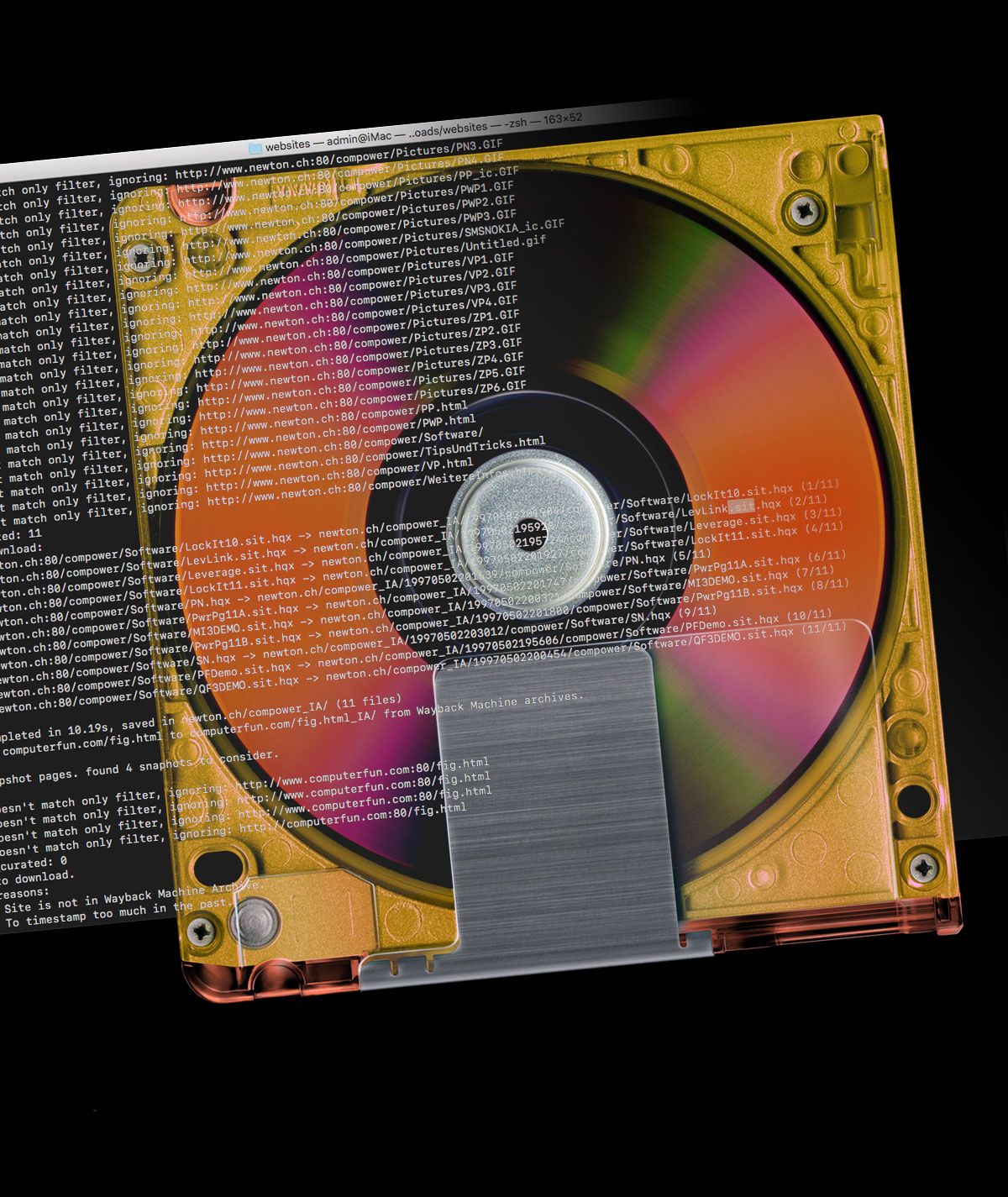

The script below reads a file of URLs (/path/to/domains.txt) and substitutes each URL, one at a time, for $line. The first "$line" names the destination folder where downloads are saved; the second is the URL queried against the Wayback Machine.

The loop reads URLs from an input file (input.file). It starts with the shell built-in while read -r line, which assigns each line of the file (one URL) to the variable line. The loop then runs wayback_machine_downloader … "$line" once per URL, waiting for each run to finish before reading the next line. The final line, done < input.file, is what feeds the file to the loop. The tee command appends stdout to a text file for later review while still displaying it on screen.
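The read-and-tee mechanics described above can be tried without contacting the Wayback Machine. The following is a minimal dry-run sketch, not the scrape itself: echo stands in for the downloader, and urls.txt, dry_run.log, and the two example domains are hypothetical names used only for illustration.

```shell
#!/bin/bash
# Dry run: print the command that would run for each URL instead of running it.
# urls.txt, dry_run.log and the example domains are hypothetical placeholders.
workdir=$(mktemp -d)
printf '%s\n' example.com example.org > "$workdir/urls.txt"

while read -r line
do
  # Skip blank lines so an empty "$line" is never passed along.
  [ -z "$line" ] && continue
  echo "would run: wayback_machine_downloader -d ${line}_IA $line"
done < "$workdir/urls.txt" | tee -a "$workdir/dry_run.log"
```

Each URL produces one line on screen and the same line in the log, which is the behavior the real loop relies on.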

while read -r line

do

wayback_machine_downloader -s -d "$line"_IA -t2001 -c3 --only "/\.(hqx|sit|sitx|dd|pkg|bin|sea|cpt)$/i" "$line"

done < input.file | tee -a log.txt

You can copy the commented script below and save it to a file such as name_of_bash_script.sh. After saving it, run chmod +x name_of_bash_script.sh so that it can be executed. In the example below the -l flag is on, which only lists file URLs in JSON format with their archived timestamps; it does not download anything. The | tee -a log.txt at the tail of the command saves stdout to log.txt.
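The save, chmod, and run steps can be sketched as follows. This is only an illustration: the script body is a harmless placeholder rather than the scrape loop, and the temporary directory is an assumption made so the example does not touch your working folder.

```shell
#!/bin/bash
# Sketch: save a script, mark it executable, and run it with its output logged.
# The script body below is a placeholder, not the real scrape loop.
workdir=$(mktemp -d)
script="$workdir/name_of_bash_script.sh"

cat > "$script" <<'EOF'
#!/bin/bash
echo "script ran"
EOF

# Without the execute bit, calling the script by path fails with "Permission denied".
chmod +x "$script"

# Run it, showing stdout on screen and appending it to a log file.
"$script" | tee -a "$workdir/log.txt"
```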

Using -l and piping the results to a text file is useful when the list of URLs comes from a database of Who.is domain name registration, hosting, and IP address information. Sites like pentest-tools.com or spyse.com can provide many URLs to scrape, and it may be worth examining the listing before committing to a download. Existing compiled lists of URLs, preferably several years old, or a simple list found on GitHub can also provide insights and potential targets for a wayback_machine_downloader scrape.
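One way to examine a saved listing before committing is to count how many files it contains. The sketch below assumes each listed file appears in the JSON output as an object with a "file_url" key; that key name and the count_listed_files helper are assumptions for illustration, so check them against your own log first.

```shell
#!/bin/bash
# Sketch: count the entries in a saved -l listing.
# Assumption: every listed file is a JSON object containing a "file_url" key.
count_listed_files() {
  # grep -o prints each match on its own line, so wc -l counts matches
  # even when several objects share one line; tr strips wc's padding.
  grep -o '"file_url"' "$1" | wc -l | tr -d ' '
}

# Usage (log.txt being the file written by tee):
# count_listed_files log.txt
```

A count of zero suggests the filter matched nothing for that domain, so a full download would likely be wasted effort.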

#!/bin/bash

# This script uses a file populated with a list of URLs as input and replaces $line with each URL in turn.

# The loop below reads that file via the redirect on the done line (/path/to/domains.txt).

while read -r line

# The loop starts with while read -r line. Here, line refers to each line (a URL) in your file.

# The loop then runs wayback_machine_downloader ... "$line", which means it runs the command against the URL on the current line of your file.

do

wayback_machine_downloader -l -s -d "$line"_IA -c6 --only "/\.(pkg|as|hqx|cpt|bin|sea|sit|sitx|dd|pit)$/i" "$line"

done < /path/to/domains.txt | tee -a log.txt

# The | tee -a log.txt at the tail of the done line appends stdout to a log file; remove it if you do not want one.

# The final line, done < /path/to/domains.txt, is what provides your file to the loop. Replace /path/to/domains.txt with the actual path to your file.

# After a download concludes, expand (disclose) the downloaded website's folder, then select the folders that appear and expand them once more. Connect to your local FTP server and create a new folder in a destination directory. Finally, drag only the contents of the last expanded folders into that new folder; this is the destination folder. Moving the files through FTP merges them automatically. You can also use the Duplicate Detective app to remove duplicates and the Find Empty Folders app to trash any empty folders.
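The merge and clean-up described above can also be approximated on the command line. This is a local stand-in for the FTP drag-and-drop, not the same workflow: it assumes the download folder holds one subfolder per snapshot, and merge_snapshots is a hypothetical helper name. Files with the same path are overwritten rather than de-duplicated, so folders processed later win.

```shell
#!/bin/bash
# Sketch: merge every snapshot folder under a download into one destination,
# then delete empty folders. Assumes one subfolder per snapshot under $src.
merge_snapshots() {
  local src=$1 dest=$2
  mkdir -p "$dest"
  for snapshot in "$src"/*/; do
    [ -d "$snapshot" ] || continue   # the glob matched nothing
    # "snapshot/." copies the folder's contents, not the folder itself;
    # same-named files are overwritten, so later folders win.
    cp -R "$snapshot". "$dest"/
  done
  # Remove empty folders inside the destination, deepest first.
  find "$dest" -mindepth 1 -type d -empty -delete
}

# Usage (both paths are placeholders):
# merge_snapshots example.com_IA /path/to/destination
```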